Analytics Tools for Startups: Build a Stack or Adopt a Platform?

Search "analytics tools for startups" and you'll get a wall of product analytics recommendations — Mixpanel, Amplitude, PostHog, GA4. Those are fine tools for tracking what users do inside your app. But if you're the person who just got tapped to "figure out the data situation," you already know that tracking button clicks isn't the problem. The problem is connecting HubSpot, Stripe, your production database, and three marketing platforms into a single view your CEO can actually use.

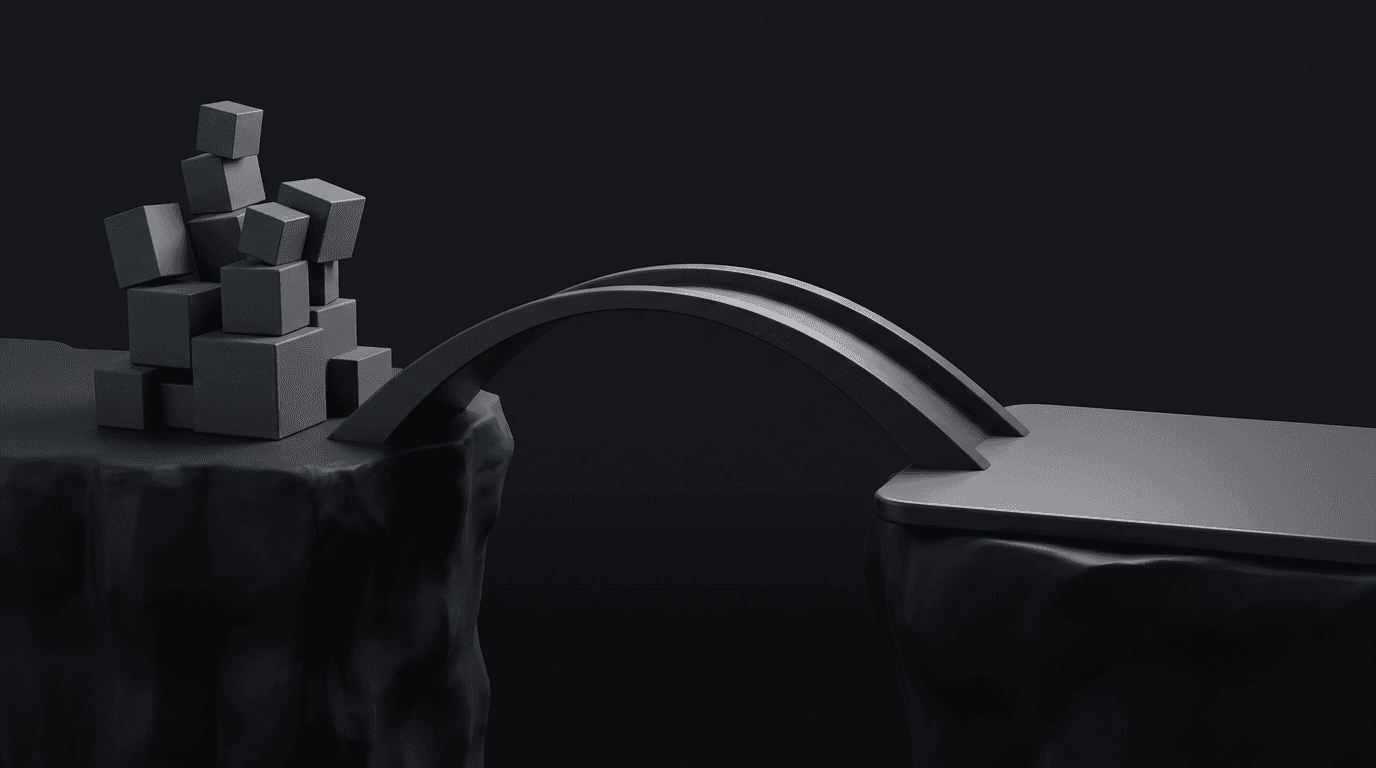

That's a fundamentally different problem. And the answer isn't "pick one of these ten tools." It's deciding whether to assemble a multi-tool data stack (a build that often goes wrong in predictable ways) or adopt a platform that handles the full pipeline.

What this guide covers:

- The three categories of analytics startups actually need (not just product analytics)

- Why product analytics tools leave a gap for revenue and operational data

- The real tradeoff between assembling a data stack and adopting an integrated platform

- What the tool landscape actually costs at startup scale (run your own numbers)

- A practical evaluation checklist for the person building the recommendation

Three Kinds of Analytics Startups Actually Need

Most "analytics tools for startups" guides treat analytics as one thing. It's not. Startups hit three distinct analytics needs as they grow, and each one draws from different data sources with different tooling requirements.

1. Product analytics — what users do inside your app. Funnels, retention curves, feature adoption, session recordings. Data comes from instrumented events in your product code. This is the category Mixpanel, Amplitude, and PostHog cover well.

2. Revenue and financial analytics — the money side. MRR, churn, LTV, billing reconciliation, cohort analysis by acquisition channel. Data comes from Stripe, payment processors, your CRM, QuickBooks (or similar GL), and your product database. No product analytics tool covers this natively (your Stripe billing data doesn't live in Mixpanel).

3. Operational analytics — the rest of the business. Pipeline velocity, support resolution times, marketing attribution, customer health scores. Data lives in HubSpot, Salesforce, Intercom, ad platforms, and a dozen other SaaS tools your team uses daily.

Most startups searching for "analytics tools" need all three. But the search results only cover #1. The gap between product analytics and business analytics is where the real architectural decision lives — and it's the one nobody talks about.

Why Product Analytics Tools Don't Solve the Whole Problem

Product analytics tools are built for instrumented event data. You drop an SDK into your app, define events ("user clicked checkout," "user viewed pricing page"), and the tool tracks, visualizes, and analyzes those events. That model works brilliantly for product teams.

It breaks down when you need to answer questions like:

- "What's our MRR by acquisition channel?" (requires Stripe + CRM + marketing data)

- "Which customer segments have the highest support costs?" (requires billing + Intercom data)

- "How does pipeline velocity differ between inbound and outbound leads?" (requires CRM + marketing automation data)

These questions require pulling data from 5-15 SaaS tools — the average startup uses 25-55 SaaS applications — and combining them into a unified data model. Different architecture, different tools, different skills than event tracking.
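To make the "unified data model" point concrete, here is a minimal sketch of the first question, MRR by acquisition channel. The rows and field names (`customer_email`, `mrr_usd`, `acquisition_channel`) are hypothetical stand-ins for simplified billing and CRM exports, not any vendor's actual schema.

```python
from collections import defaultdict

# Hypothetical rows shaped like simplified exports from a billing tool and a CRM.
subscriptions = [
    {"customer_email": "a@example.com", "mrr_usd": 500},
    {"customer_email": "B@example.com", "mrr_usd": 1200},
    {"customer_email": "c@example.com", "mrr_usd": 300},
    {"customer_email": "d@example.com", "mrr_usd": 250},
]
crm_contacts = [
    {"email": "a@example.com", "acquisition_channel": "paid_search"},
    {"email": "b@example.com", "acquisition_channel": "outbound"},
    {"email": "c@example.com", "acquisition_channel": "paid_search"},
]


def mrr_by_channel(subscriptions, crm_contacts):
    """Join billing rows to CRM rows on email and sum MRR per channel."""
    # Normalize the join key; mismatched casing is a common cross-tool problem.
    channel_by_email = {
        c["email"].lower(): c["acquisition_channel"] for c in crm_contacts
    }
    totals = defaultdict(int)
    for sub in subscriptions:
        # Customers missing from the CRM are kept and labeled, not dropped.
        channel = channel_by_email.get(sub["customer_email"].lower(), "unknown")
        totals[channel] += sub["mrr_usd"]
    return dict(totals)


result = mrr_by_channel(subscriptions, crm_contacts)
print(result)
```

Even in this toy version, most of the work is in the join key and the unmatched rows rather than the arithmetic, which is why the problem calls for different tooling than event tracking.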

| | Product Analytics | Business Analytics |
|---|---|---|
| Data source | Instrumented events in your app | SaaS APIs: CRM, billing, support, marketing |
| Setup | SDK install + event definitions | Connectors + storage + modeling + BI |
| Who uses it | Product team | Entire company |
| Example question | "What's our Day-7 retention?" | "What's our MRR by cohort and channel?" |
| Typical tools | Mixpanel, Amplitude, PostHog | Fivetran + Snowflake + Looker, or an integrated platform |

A note on PostHog: PostHog has expanded significantly beyond product analytics — it now offers a managed DuckDB warehouse, 120+ connectors (including Stripe and HubSpot), BI dashboards, and a SQL editor. It's the closest a product analytics tool has come to becoming a full platform. But its architecture remains developer-oriented and product-data-first. It lacks a governed semantic layer (consistent metric definitions enforced across all queries and AI), and its pricing and interface aren't built for the non-technical stakeholders who need to self-serve on revenue and ops data.

The Two Paths for Business Analytics

Once you need analytics beyond product events, you're choosing between two fundamentally different approaches.

Path 1: Assemble a modern data stack

You pick a tool for each layer: an ingestion tool to pull data from your SaaS apps (Fivetran, Airbyte), a cloud warehouse to store it (Snowflake, BigQuery), a transformation tool to model it (dbt), and a BI tool to visualize it (Looker, Metabase, Looker Studio). This is the approach that dominates data engineering blog posts and conference talks.

What's good about it:

- Maximum flexibility — you can swap any layer independently

- Deep customization for complex data modeling needs

- Large ecosystem with extensive documentation and community support

What's hard about it:

- 4+ vendor contracts, each with its own billing, support, and update cadence

- Integration maintenance — when Fivetran changes a schema, it can break your dbt models, which breaks your Looker dashboards

- Requires someone who can manage it. 62% of data teams report using 10+ tools, and 70% of data leaders say their stacks are too complex.

- Individual tools install in hours; getting to reliable cross-functional analytics takes weeks to months depending on your modeling complexity and team capacity
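The integration-maintenance point above can be illustrated with a small guard that compares the columns a downstream model expects against what the ingestion layer actually delivered. This is a sketch under assumptions: the column names are hypothetical, and a real stack would typically lean on its transformation tool's own tests rather than a hand-rolled check.

```python
def find_schema_drift(expected_columns, actual_columns):
    """Return columns that disappeared and columns that newly appeared."""
    expected, actual = set(expected_columns), set(actual_columns)
    return {
        "missing": sorted(expected - actual),
        "unexpected": sorted(actual - expected),
    }


# Hypothetical case: the upstream source renamed `amount` to `amount_cents`.
expected = ["id", "customer_id", "amount", "created_at"]
actual = ["id", "customer_id", "amount_cents", "created_at"]

drift = find_schema_drift(expected, actual)
if drift["missing"]:
    # Stop before running downstream models so dashboards do not break silently.
    print(f"Schema drift detected: {drift}")
```

Running a check like this before the transformation step turns a broken dashboard into an explicit failure, but it is one more piece of glue that someone on the team has to own.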

The 2025 consolidation wave: The "best-of-breed" argument has weakened considerably in the past year. Fivetran acquired Census (reverse ETL) in May 2025, acquired Tobiko Data/SQLMesh (transformation) in September 2025, and announced a merger with dbt Labs in October 2025. Meltano was acquired by Matatika in December 2025. Four major data stack consolidation events in eight months.

You're no longer picking independent best-of-breed tools — you're picking a primary vendor's ecosystem. If the stack vendors themselves are consolidating, the independent best-of-breed argument that justified assembling a stack no longer holds the way it did two years ago. (For a deeper dive on this decision, see our data stack vs. data platform comparison.)

Path 2: Adopt an integrated analytics platform

A single platform handles ingestion, storage, modeling, visualization, and AI. Instead of assembling four tools and making them talk to each other, you connect your data sources and start building dashboards.

What's good about it:

- One vendor, one bill, one interface to learn

- Connections to dashboards in days, not months

- No integration maintenance — the platform owns the entire pipeline

- Non-technical users can self-serve without going through you

What's hard about it:

- Less flexibility for exotic data engineering patterns

- Platform risk — you're dependent on one vendor's roadmap

- Some platforms trade depth for breadth

The "all-in-one" category has matured significantly. Early platforms in this category were often lightweight tools that hit their limits at moderate scale: table count caps, silent connector failures, no SQL access. If you've been burned by one of those, skepticism is understandable. Modern integrated platforms are architecturally different. The questions to ask: Does the platform store data in open formats you can export? Does it give you SQL access? Are its actual limits documented? Definite, for example, runs on DuckDB with open data formats, so your data is never locked in, and includes a full semantic layer alongside SQL access and drag-and-drop dashboards.

When Each Path Makes Sense

If you have a data engineer who wants to build, Path 1 is a legitimate choice — the flexibility is real, and with the right team, a well-assembled stack can handle complex transformation needs that a platform might constrain. Go this route when you have dedicated engineering capacity, complex data modeling requirements, or significant existing warehouse investment (especially if you're sitting on startup credits).

Path 2 makes sense when you don't have dedicated data engineering, you need cross-functional analytics across revenue, ops, and product data fast, and you'd rather not manage four vendor contracts. Most startups at the "figure out our data situation" stage — the reader this post is written for — land here.

If you only need product analytics right now (funnels, retention, feature adoption), Mixpanel, Amplitude, or PostHog are excellent choices and this decision framework is overkill. Come back when your CEO asks for a cross-functional view.

What the Two Paths Actually Cost

The larger cost of a data stack isn't the tool subscriptions — it's the coordination. Schema changes in your ingestion layer break your transformation models. Four vendor support channels mean four different escalation paths when something fails before a board meeting. And you'll eventually need observability and orchestration tooling that nobody mentions in the initial pricing conversation.

That said, the tool costs alone are worth examining. Here's what a realistic startup scenario looks like — a 50-person B2B SaaS company connecting 3-5 data sources (CRM, billing, production database).

Stack tools cost (Fivetran + Snowflake + dbt + Metabase)

| Component | Low estimate | Medium estimate | High estimate |
|---|---|---|---|
| Fivetran (ingestion) | $126/mo | $180/mo | $234/mo |
| Snowflake (warehouse) | $312/mo | $1,047/mo | $3,440/mo |
| dbt Cloud (transformation, 3 seats) | $300/mo | $300/mo | $300/mo |
| Metabase Cloud (BI) | $100/mo | $310/mo | $490/mo |
| Tools total | $838/mo | $1,837/mo | $4,464/mo |

These are tools-only costs. Snowflake dominates the variance because it's credit-based — light query usage stays low, but as your team runs more dashboards and queries, compute costs scale fast.
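If you want to rerun the table with your own numbers, the arithmetic is simple enough to sketch. The figures below are copied from the table above; the variable names are illustrative only.

```python
# Monthly tool costs in USD as (low, medium, high), copied from the table above.
stack_costs = {
    "Fivetran": (126, 180, 234),
    "Snowflake": (312, 1047, 3440),
    "dbt Cloud": (300, 300, 300),
    "Metabase Cloud": (100, 310, 490),
}

# Column-wise totals for the low, medium, and high scenarios.
totals = tuple(sum(column) for column in zip(*stack_costs.values()))

# Snowflake's share of each scenario's total, in whole percent.
snowflake_share = [
    round(cost / total * 100)
    for cost, total in zip(stack_costs["Snowflake"], totals)
]

# How much of the low-to-high spread is explained by Snowflake alone.
snowflake_spread = stack_costs["Snowflake"][2] - stack_costs["Snowflake"][0]
total_spread = totals[2] - totals[0]
spread_share = round(snowflake_spread / total_spread * 100)

print(totals, snowflake_share, spread_share)
```

On these inputs, Snowflake accounts for most of the difference between the low and high totals, which is the sense in which it "dominates the variance."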

A note on startup credits: Snowflake and Fivetran both offer startup credit programs that can be substantial — sometimes $50K-$100K or more. Those credits are real money, and they'll cover your first year. The question is what happens in year two, when your stack has eighteen months of accumulated complexity, your team has built dependencies on four tools, and the credits are gone. Credits don't reduce the coordination overhead of running the stack — they just defer the bill.

Costs modeled using Definite's Data Stack Cost Calculator. Scenario: B2B SaaS, 50 employees, Fivetran + Snowflake + dbt Cloud + Metabase.

Integrated platform cost

An integrated platform like Definite starts at $250/mo for the full pipeline (ingestion, warehouse, semantic layer, dashboards, and AI) with unlimited connectors and users. There's a free tier for lighter usage. Credit-based scaling means your actual cost depends on data volume and sync frequency, but the starting point is less than a third of even the low stack estimate ($838/mo).

The cost gap is real — roughly 3-7x at typical startup usage on tools alone, and significantly wider at heavy query volumes. But the larger difference is operational: the stack scenario also requires modeling time, pipeline debugging, schema change coordination across tools, and eventually observability tooling that the integrated approach eliminates entirely.
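The multiples quoted here can be recomputed from the stack totals and the $250/mo starting price. Treat this as a rough sketch: it assumes the platform stays at its starting price, which the credit-based scaling described above may not hold to at higher volumes.

```python
# Stack tool totals (USD/month) from the cost table, and the platform's
# quoted starting price. Actual platform cost scales with usage.
stack_totals = {"low": 838, "medium": 1837, "high": 4464}
platform_start = 250

# Ratio of stack cost to platform starting price, to one decimal place.
cost_multiples = {
    scenario: round(total / platform_start, 1)
    for scenario, total in stack_totals.items()
}

for scenario, multiple in cost_multiples.items():
    print(f"{scenario}: {multiple}x the platform starting price")
```

The low and medium scenarios land near the 3-7x range, while the high scenario is where the gap widens considerably.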


What to Look For When You're Building the Recommendation

If you're the person writing the recommendation doc for your exec, here's the evaluation checklist that actually matters. Not "which tool has the best logo" — what will survive a pilot, scale past the first quarter, and not require a rebuild in eighteen months.

Connector coverage for your stack. "500+ connectors" is marketing. What matters: do they support your specific HubSpot instance, your Stripe configuration, your Postgres database? Ask any vendor for their connector page and check the sync options (full refresh vs. incremental, available tables, sync frequency). For example, here's what a connector page should show you.

SQL access. If a platform doesn't let you write SQL when drag-and-drop isn't enough, walk away. You will need it — for debugging, for complex joins, for the metric definition your CEO invents at 4pm on a Friday. This is non-negotiable for any technical evaluator.

Governed semantic layer. Define "revenue" once, use it everywhere — in dashboards, in AI queries, in exports. Without a semantic layer, every team member who writes a query reinvents the metric definition, and your numbers will never match across reports. This is the difference between analytics you trust and analytics you argue about.
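As a toy illustration of "define once, use everywhere," the sketch below keeps each metric in a single registry that every consumer calls. The `revenue` rule shown (paid invoices, net of refunds) and all field names are hypothetical. Real semantic layers are usually declarative and far richer than this.

```python
def revenue(invoices):
    """Hypothetical definition: paid invoices only, net of refunds."""
    return sum(
        inv["amount_usd"] - inv["refunded_usd"]
        for inv in invoices
        if inv["status"] == "paid"
    )


# The single source of truth for metric definitions.
METRICS = {"revenue": revenue}


def compute_metric(name, rows):
    """Every dashboard, export, or AI query goes through this one entry point."""
    if name not in METRICS:
        raise KeyError(f"Unknown metric: {name}")
    return METRICS[name](rows)


invoices = [
    {"status": "paid", "amount_usd": 1000, "refunded_usd": 0},
    {"status": "paid", "amount_usd": 500, "refunded_usd": 100},
    {"status": "open", "amount_usd": 700, "refunded_usd": 0},
]

# Two different consumers get the same number because they share the definition.
dashboard_value = compute_metric("revenue", invoices)
export_value = compute_metric("revenue", invoices)
print(dashboard_value, export_value)
```

The design choice that matters is the single entry point: changing what "revenue" means happens in one place, and asking for an undefined metric fails loudly instead of being improvised.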

Non-technical access. Can your CEO get answers without going through you? If every question routes through the one person who knows SQL, you've replaced one bottleneck (spreadsheets) with another (yourself). Look for drag-and-drop dashboards, natural language queries, and Slack integration.

Data export and lock-in. What happens to your data if you leave? Open standards matter here: platforms built on DuckDB and Parquet let you export your data in standard formats and move it to another system without a multi-month migration project. Proprietary formats mean you're locked in.

Scalability without re-platforming. Equally important: will this platform grow with you, or will you be running this evaluation again in eighteen months? Ask about the upgrade path — can you go from startup to enterprise without migrating data or rebuilding dashboards?

Actual limits, documented. Row counts, query timeouts, connector refresh rates. Not the marketing page — the docs. If a vendor can't tell you their actual limits, they either don't know or don't want you to know. Both are bad.

Support model for your size. What's the experience like for a startup paying $250-$500/mo, not an enterprise paying $50K? Check G2 reviews, ask in the demo, look at the support response time commitments. The vendor who treats you well at $500/mo is the vendor who'll earn your business at $5,000/mo.
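One way to keep pilot notes comparable across vendors is to record the checklist as data. The sketch below is only a bookkeeping aid: the criterion keys are shorthand for the items above, SQL access is treated as a hard requirement because the checklist calls it non-negotiable, and the example findings are invented.

```python
# Shorthand keys for the eight checklist items above.
CRITERIA = [
    "connector_coverage",
    "sql_access",
    "semantic_layer",
    "non_technical_access",
    "data_export",
    "scalability",
    "documented_limits",
    "support_model",
]
# The checklist describes SQL access as non-negotiable.
MUST_HAVE = {"sql_access"}


def evaluate(findings):
    """Summarize one vendor's pilot findings (criterion -> True/False)."""
    gaps = [c for c in CRITERIA if not findings.get(c, False)]
    return {
        "passes": not any(c in MUST_HAVE for c in gaps),
        "met": len(CRITERIA) - len(gaps),
        "total": len(CRITERIA),
        "gaps": gaps,
    }


# Invented findings for a hypothetical vendor pilot.
findings = {
    "connector_coverage": True,
    "sql_access": True,
    "semantic_layer": False,
    "non_technical_access": True,
    "data_export": True,
    "scalability": True,
    "documented_limits": False,
    "support_model": True,
}
report = evaluate(findings)
print(report)
```

The output is a list of gaps to raise in the next vendor call, not a score to rank on. Which gaps are acceptable depends on your team.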

Your Analytics Architecture Today Determines Whether AI Works Tomorrow

Your analytics architecture choice today determines whether AI agents can work with your data tomorrow. This isn't theoretical — it's already happening.

The problem with layering AI on top of a fragmented stack: only 16.7% of AI-generated answers are accurate on open-ended business questions without semantic governance. On enterprise databases with 1,000+ columns, accuracy drops to 6%. The AI isn't dumb — it's working with a system that has no consistent definitions, no governed metrics, and no way to verify its own answers.

A governed semantic layer changes this. When "revenue" has one definition, enforced across every query and every AI interaction, the AI starts from a foundation of trust. It doesn't have to guess what "monthly revenue" means or which of three conflicting calculation methods is correct.

Integrated platforms have a structural advantage here. When the semantic layer governs everything from ingestion to visualization to AI, metric definitions are enforced system-wide, so the AI analyst works from the same definitions as every dashboard. That's the difference between an AI that guesses and one that knows. Platforms that also expose governed data via open protocols (like MCP) let you work with your data from AI tools like Claude and Cursor without context-switching.

The industry recognizes this: Snowflake and Salesforce launched the Open Semantic Interchange in September 2025 to develop open standards for semantic data modeling. Semantic layers are becoming critical infrastructure, not a nice-to-have. The question is whether yours is built in from the start or bolted on after you've already accumulated years of ungoverned metrics.

The Tool Landscape, Organized by What You Actually Need

Rather than a wall of 30+ tools, here's the landscape organized by the three analytics categories that actually matter.

Product analytics

Track what users do inside your app. If product analytics is all you need, these tools are excellent — this guide is for when you need more.

| Tool | Best for | Pricing |
|---|---|---|
| PostHog | Open-source all-in-one (expanding into full data stack) | Free tier; usage-based |
| Mixpanel | Event tracking with strong funnels and cohort analysis | Free to $28+/mo |
| Amplitude | Enterprise behavioral analytics | Free to $49+/mo |
| Heap | Auto-capture (no manual event instrumentation) | Contact sales |
| Google Analytics 4 | Web traffic and marketing analytics (free) | Free |

Business analytics — stack components

Assemble these yourself if you have a data engineer and want maximum control. For a breakdown of what this actually costs: Data Stack Cost Calculator.

| Tool | Layer | Startup cost | Notes |
|---|---|---|---|
| Fivetran | Ingestion | ~$126-$234/mo | Announced merger with dbt Labs (Oct 2025) |
| Airbyte | Ingestion | Free (self-hosted); cloud varies | Open-source; more setup, lower cost |
| Snowflake | Warehouse | ~$312-$3,440/mo | Startup credits available. See our Snowflake comparison. |
| BigQuery | Warehouse | Free tier; usage-based | Good GCP fit. See our BigQuery comparison. |
| dbt | Transformation | Free (Core); $100/user/mo (Cloud) | Merging with Fivetran. Open-source Core remains free. |
| Metabase | BI | From $100/mo (Cloud) | Open-source option. See our Metabase comparison. |
| Looker Studio | BI | Free | Limited vs. paid tools. See our Looker Studio comparison. |
| Tableau | BI | $75+/user/mo | Enterprise BI. See our Tableau comparison. |

Business analytics — integrated platforms

Single platform for the full pipeline. Connect your sources and start building. This category is still maturing — here are the notable options:

| Tool | What's included | Best for | Pricing |
|---|---|---|---|
| Definite | Ingestion (500+ sources) + DuckDB warehouse + semantic layer + dashboards + AI analyst + MCP | Startups wanting full-stack analytics with governed metrics and AI | Free; $250/mo (Platform) |
| Mozart Data | Ingestion + Snowflake warehouse + basic transformations + BI | Teams that want managed Snowflake without the setup | From ~$1,000/mo |
| Panoply | Ingestion + warehouse + basic SQL analytics | Small teams needing a managed warehouse with ETL built in | From ~$500/mo |
| GoodData | Semantic layer + dashboards + embedded analytics | Teams focused on embedded analytics or customer-facing dashboards | Free tier; paid plans vary |

Each makes different tradeoffs. Definite emphasizes the governed semantic layer and AI agent across the full pipeline. Mozart Data gives you a managed Snowflake without the setup overhead. Panoply is warehouse-first. GoodData is strongest at embedded analytics. For a deeper comparison: The All-in-One Data Platform Buyer's Guide.

FAQ

Do I need a data warehouse if I just want dashboards from my SaaS tools?

Not necessarily. If you're connecting 3-5 SaaS tools and building standard dashboards (MRR, pipeline, marketing attribution), an integrated platform handles ingestion, storage, and visualization without you setting up a separate warehouse. A standalone warehouse makes sense when you have complex transformation requirements, very large data volumes, or a data engineering team to manage it. For most startups, the warehouse is infrastructure overhead you don't need to own directly.

What's the real cost of a data stack for a startup?

For a 50-person B2B SaaS company with 3-5 data sources, the tools alone (Fivetran + Snowflake + dbt Cloud + Metabase) run $838-$4,464/mo depending on data volume and query intensity. Most of the variance comes from Snowflake compute costs. This doesn't include setup time, maintenance, or the eventual need for observability tooling. Run your specific scenario through the Data Stack Cost Calculator.

Can I start with an integrated platform and switch to a stack later?

Yes — if the platform uses open standards. Platforms built on DuckDB and Parquet store your data in formats any warehouse can read. If you outgrow the platform (or your needs become too specialized), you export your data and models and rebuild on your own infrastructure. The key question to ask: "In what format can I export my data, and how long would a migration take?" If the answer is proprietary formats and a multi-month project, you're locked in.

How do I evaluate analytics tools if I don't have a data engineering background?

Focus on the checklist above: connector coverage for your specific tools, SQL access, semantic layer, non-technical access for your team, and the export story. Run a pilot with real data — not sample data. Connect your actual HubSpot, your actual Stripe, and try to answer a question your CEO asked last week. If you can get from zero to a useful dashboard in a week, the tool is probably viable. If you're still configuring connectors after a week, it's not.

Is AI analytics worth it for early-stage startups?

Worth it if the AI operates on governed data. An AI analyst that runs on consistent metric definitions can genuinely save hours of manual reporting. An AI that queries raw, ungoverned data will give you confident-sounding wrong answers — which is worse than no answer at all.

The bar: does the AI have access to a semantic layer that enforces metric definitions? If yes, it's a real productivity gain. If it's just an LLM chatting with your raw database, the accuracy numbers are brutal.

Ready to skip the assembly? Start with Definite for free — connect your data sources and see your first dashboard in minutes, not months.