You Don't Need Snowflake + Fivetran + dbt + Looker. Here's the Evidence.

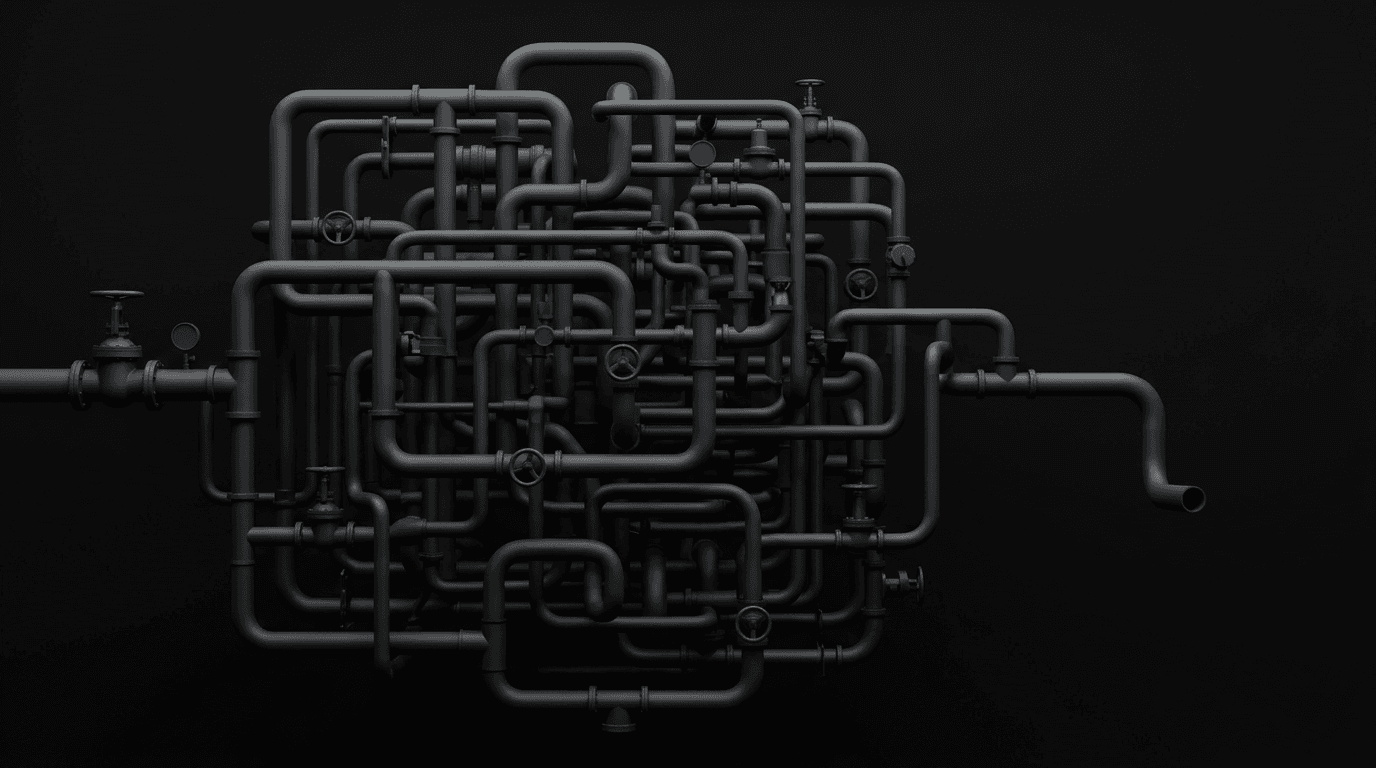

You've been evaluating data tools for weeks. Maybe months. You've done the Snowflake demo. You've had the Fivetran call. Someone mentioned dbt, and now you're reading about transformation layers at 11 PM on a Tuesday. Every vendor you talk to assumes you already have the other pieces in place. Fivetran asks where you want to load the data — but you haven't picked a warehouse yet. Snowflake asks how you're transforming data — but you don't have anyone who can run dbt. You just wanted answers. Now you're assembling a Rube Goldberg machine.

You're not alone. We talk to companies in this exact situation every week — startups and growth-stage businesses trying to stand up analytics for the first time, or companies that already built the stack and are living with the consequences. After years of these conversations, a clear pattern has emerged: every modern data stack build that goes sideways follows one of three failure modes.

These aren't hypotheticals. They're from real conversations with real businesses — customers and prospects who told us exactly what happened when they tried to build the "best practice" data stack everyone told them they needed.

If you're short on time: The modern data stack was designed for companies with dedicated data engineering teams. If you don't have one, assembling 3–5 separate tools creates a coordination problem you're not equipped to handle. The alternative: consolidated platforms that combine ingestion, warehousing, governed metrics, and AI in one system — answers in days, not months.

Every Failed Data Stack Build Follows the Same Shape

Every company that reaches out to us has a data story. Sometimes it's "we have nothing and the board is asking questions we can't answer." Sometimes it's "we built the whole stack and now we spend more time maintaining it than using it." But the shape of the problem is remarkably consistent.

The modern data stack — the Snowflake + Fivetran + dbt + Looker assembly, or some variation of it — was designed for companies with dedicated data engineering teams. Companies like Netflix, Airbnb, and GitLab popularized the pattern. They had the people, the budget, and the organizational maturity to make it work.

Most companies evaluating these tools for the first time don't have any of that. They have a CEO who needs board metrics, a VP who came from a larger company and said "we need a data stack," and maybe one stretched analyst who also does RevOps. What follows is predictably painful.

You Never Finished Building It

This is the most common failure mode we see, and the most demoralizing.

A fintech CEO we spoke with signed an annual contract with a major cloud data warehouse and purchased a managed ETL tool. His team had seven data sources to connect — a MongoDB production database, two payment processors, and a handful of SaaS tools. Six weeks in, they'd gotten three sources connected, but the schemas didn't match what they expected. The ETL tool was syncing raw data that nobody knew how to query. After a month and a half, the CEO still couldn't answer the question his investors were asking: what's our actual delinquency rate? He didn't start a company to become a data infrastructure shop. He needed a number for his next fundraise. Instead, he was in a Slack thread with his data engineer debating MongoDB field mappings.

He's not an outlier. A data consultant we work with described a client — a mid-size B2B marketplace — that started a build with BigQuery, Power BI, and Fivetran. After a full month, data was flowing into BigQuery, but nobody had built models on top of it. The raw tables were there. The dashboards weren't. The gap between "data is in the warehouse" and "someone can answer a question" turned out to be the hardest part — and the part nobody had budgeted time for.

The cold-start problem nobody talks about

Each tool in the stack assumes the other pieces already work:

- Your ETL tool asks "where should I load data?" — assumes you have a warehouse configured

- Your warehouse asks "what transformations do you need?" — assumes you have a data engineer running dbt

- Your BI tool asks "where are your modeled tables?" — assumes someone has already cleaned and structured the data

- Your semantic layer asks "what are your metric definitions?" — assumes someone knows the answer

Nobody sells the assembly. You buy the parts, and the integration work — the hardest part — is left to you. For a company without a data engineer, that's where the project dies.

Both of these companies eventually switched to a consolidated platform and had working dashboards within days — not because the technology was magic, but because there was nothing to assemble.

And the cost isn't just the money you spent on tool contracts. It's the months you went without answers. While you were debugging Airbyte connections, your board was asking about churn and you were still pulling numbers from a Google Sheet.

You Built It, But Can't Maintain It

The second failure mode is sneakier. The build succeeds. Data flows. Dashboards exist. And then — slowly — the system starts to rot.

A head of data at a market research company described his situation to us: he'd inherited a stack of Metabase, dbt, Redshift, and Tableau. Four vendors, four sets of credentials, four things that could break independently. His Tableau dashboards felt like stepping back in time. His dbt project had 200+ models and nobody could tell you which ones were still accurate. And the AI-analytics tool they'd layered on top was hallucinating metrics because the underlying definitions were inconsistent. He dropped all four tools. When he demoed the consolidated replacement to his COO and head of product, one of them described it as a jaw-dropping moment — not because the technology was flashy, but because they'd assumed that level of clarity simply wasn't possible with their data.

At a SaaS company running five tools — a CDP, managed ETL, cloud warehouse, BI tool, and product analytics platform — every new hire needed a separate onboarding call just to understand what connected to what. The Head of CS, three months into his role, admitted he was still "blind" to retention metrics because no single tool could show him a cohort view that matched the CFO's definition of churn. The VP of Ops was building monthly board reports in Excel because pulling the same numbers from the BI tool took longer and gave different results depending on who ran the query. They eventually consolidated — and the next batch of hires were productive within a day.

Maintenance compounds — it doesn't scale

Maintenance doesn't scale linearly with tool count — it compounds. Our cost analysis found that moving from 6 to 11 data connectors adds roughly 40% more coordination and reconciliation burden. And by the time you hit Stage 2 growth (around 150 employees), the second data hire "spends 40% of their time maintaining what the first person built — not creating new value."

One Reddit user described the resulting data team as "butter scraped over too much bread."

The maintenance surface area grows in specific, predictable ways:

- Connector failures. Your ETL sync breaks on a Saturday night because a source API changed. Your transformation models fail downstream. Your dashboards show stale data. Monday morning, your exec team is looking at numbers from last week and nobody knows why.

- Semantic drift. "Revenue" means one thing in the warehouse, another in the BI tool, a third in the CFO's spreadsheet. The more tools in the stack, the more places metric definitions can diverge.

- The bloated middle. Data models that started simple become convoluted and poorly optimized over time. Nobody remembers why certain transformations exist. Nobody wants to touch them. The data engineer becomes a full-time janitor.
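To make the semantic drift point concrete, here is a minimal sketch of how three reasonable definitions of "revenue" produce three different numbers from the same rows. The `Order` fields, the sample data, and the three definitions are all hypothetical, chosen only to illustrate the pattern; they are not taken from any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    amount: float    # gross order value
    refunded: float  # amount refunded to the customer
    is_test: bool    # internal test order


# Hypothetical sample rows, for illustration only.
ORDERS = [
    Order(amount=100.0, refunded=0.0, is_test=False),
    Order(amount=250.0, refunded=50.0, is_test=False),
    Order(amount=40.0, refunded=0.0, is_test=True),
]


def revenue_warehouse(orders):
    """Warehouse model: gross amount, test orders included."""
    return sum(o.amount for o in orders)


def revenue_bi_tool(orders):
    """BI tool: gross amount, test orders filtered out."""
    return sum(o.amount for o in orders if not o.is_test)


def revenue_cfo_sheet(orders):
    """CFO spreadsheet: net of refunds, test orders filtered out."""
    return sum(o.amount - o.refunded for o in orders if not o.is_test)


def revenue_by_source(orders):
    """Collect each tool's answer to the same question."""
    return {
        "warehouse": revenue_warehouse(orders),
        "bi_tool": revenue_bi_tool(orders),
        "cfo_sheet": revenue_cfo_sheet(orders),
    }


print(revenue_by_source(ORDERS))
```

None of the three definitions is wrong on its own. The problem is that each one lives in a different tool, so nobody notices the disagreement until two reports land in the same meeting.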

Meanwhile, the people costs compound faster than the technology costs. Between Stage 1 and Stage 2 of a typical B2B SaaS company, people costs scale 9.5x while technology costs only scale 1.6x. The tools are the cheap part. The humans keeping them alive are the real expense.

It Works, But Costs More Than the Problem It Solves

The third failure mode is the one nobody warns you about, because from the outside it looks like success.

The CEO of a multi-brand e-commerce company told us his team had been evaluating Snowflake and Databricks. They wanted the full architecture — bronze, silver, and gold data layers, with a proper semantic layer on top. They had 11 Shopify stores, Amazon Seller Central across multiple countries, Facebook Ads, Google Ads, and NetSuite. Their technical lead had spec'd out the whole system: warehouse, ETL, transformation layer, BI, plus the orchestration to keep it all running. When they tallied the first-year cost — not just the tools, but two data engineers, a three-month implementation timeline, and ongoing maintenance — the number exceeded what they were spending on their entire marketing stack. They chose a completely different path and had dashboards across all 11 stores within weeks.

Another company — a SaaS business with about 200 employees — actually finished the build. They had a cloud warehouse, managed ETL, dbt for transformations, and an orchestration layer. It all worked. But their warehouse vendor had moved them to Enterprise-tier pricing with time-travel surcharges they hadn't anticipated. Their ETL costs had doubled after a billing model change. And the three-person data team spent most of their week keeping the pipes running, not building anything new. They weren't looking to rip it out — but they were quietly asking whether the math still made sense.

The hidden math of tool cost stacking

Tool costs compound in ways that aren't obvious at the start.

ETL pricing is especially volatile. When Fivetran changed its billing model in 2025, most teams saw costs increase 40–70%. Some reported bills that doubled or quadrupled. High-volume deployments can hit $15,000 to $30,000 per month — just for the connector layer.

But the tools are almost a rounding error compared to the people. A fully loaded, US-based data engineer costs $150,000 to $250,000 per year. And you probably need more than one. At a 300-person company, a data team of "two data engineers and one team lead" was described as "the smallest team I've worked for."

When you add it up — warehouse compute, ETL licensing, BI seats, transformation tooling, orchestration, and the team to run it — most companies are looking at $100,000+ per year before they've answered a single business question. And that number grows faster than the company does.
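A rough way to run this math for your own situation is sketched below. The monthly tool prices are placeholder assumptions, not vendor quotes; the engineer cost uses the low end of the $150,000 to $250,000 range cited above. Swap in your own contracts and headcount.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StackCost:
    monthly_tool_costs: dict  # tool name -> USD per month
    engineers: int            # number of data engineers
    cost_per_engineer: int    # fully loaded USD per year

    def annual_tools(self):
        """Yearly spend on tool contracts."""
        return 12 * sum(self.monthly_tool_costs.values())

    def annual_people(self):
        """Yearly spend on the team that runs the stack."""
        return self.engineers * self.cost_per_engineer

    def annual_total(self):
        return self.annual_tools() + self.annual_people()

    def people_share(self):
        """Fraction of total cost that goes to people."""
        return self.annual_people() / self.annual_total()


# Placeholder tool prices (assumptions), one engineer at the low end
# of the range cited in the article.
example = StackCost(
    monthly_tool_costs={
        "warehouse": 1500,
        "etl": 1000,
        "bi": 500,
        "orchestration": 200,
    },
    engineers=1,
    cost_per_engineer=150_000,
)

print(f"Tools:  ${example.annual_tools():,}/year")
print(f"People: ${example.annual_people():,}/year")
print(f"Total:  ${example.annual_total():,}/year")
print(f"People share of total: {example.people_share():.0%}")
```

Even with modest placeholder tool prices and a single engineer, the people line dominates the total under these assumptions, which is the pattern the section describes.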

Estimate your real data stack cost →

Why This Keeps Happening

If the pattern is so predictable, why do companies keep making the same bet?

Because the modern data stack is still presented as best practice. Every blog post, conference talk, and advisor recommendation reinforces the same architecture. Snowflake + Fivetran + dbt + Looker. It's the "serious company" choice. Deviating from it feels like cutting corners. One CEO we spoke with described the social pressure directly: his VP of Marketing came from a company with a five-person data team and a $2M data budget. When she said "we need a data stack," she was picturing what she had before — not what's realistic at their scale.

Because each vendor optimizes for their slice, not the whole. Snowflake builds the best warehouse they can. Fivetran builds the best connectors they can. dbt builds the best transformation tool they can. Nobody is incentivized to tell you that assembling these pieces is the hardest part, or that you might not need to. Even vendors are starting to recognize this: the merger of Fivetran and dbt Labs is a step toward consolidation. But bolting two tools together is not the same as building one system.

Because even the budget version has the same structural problem. You might be thinking: what about BigQuery + Airbyte + dbt + Metabase? Or the fully open-source path — DuckDB, dbt-core, and an open-source BI tool? The tool costs are lower, sometimes dramatically so. But the assembly problem is identical. You still need someone to connect the pieces, maintain the integrations, and govern the definitions. The failure modes above aren't caused by Snowflake's pricing — they're caused by the architecture of assembling separate tools into a system.

Because the architecture makes AI significantly harder to deploy reliably. When your data lives in four tools with four schemas and no shared way to define what "revenue" or "churn" actually means, AI will guess at definitions and get them wrong. More than 80% of AI projects fail — and while the causes are broad, fragmented data infrastructure is a consistent contributor. An AI that can only read your data — but can't build models, create dashboards, or act on insights — is a commentator, not an operator.

What to Do Instead

The alternative isn't "don't do analytics." It's stop assembling.

The reason these failure modes exist isn't that the individual tools are bad — Snowflake, Fivetran, and dbt are excellent products for the teams they were built for. The problem is that most companies end up assembling them without the team to operate the assembly. It's like buying professional kitchen equipment and expecting it to cook dinner by itself.

A consolidated platform combines the layers you'd otherwise assemble (ingestion, warehousing, a semantic layer, analytics, and AI) into one system. The semantic layer is a shared set of metric definitions that governs every query and dashboard. You connect your data sources, define your metrics once, and start asking questions. Connectors have fewer ways to break because there are no handoffs between vendors. The metrics don't drift because there's one definition, not three.
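As a rough illustration of "define your metrics once," the sketch below keeps a single registry of metric definitions and has every consumer resolve metrics by name. This is a generic pattern, not Definite's actual implementation; the registry, the `metric` decorator, the two consumer functions, and the net-of-refunds definition are all hypothetical.

```python
from typing import Callable, Dict, List

Row = Dict[str, float]
Metric = Callable[[List[Row]], float]

# Single registry: each metric is defined exactly once.
METRICS: Dict[str, Metric] = {}


def metric(name: str):
    """Register a metric definition under a unique name."""
    def register(fn: Metric) -> Metric:
        if name in METRICS:
            raise ValueError(f"metric {name!r} is already defined")
        METRICS[name] = fn
        return fn
    return register


@metric("revenue")
def revenue(rows: List[Row]) -> float:
    # Assumed definition for illustration: net of refunds.
    return sum(r["amount"] - r["refunded"] for r in rows)


def dashboard_tile(name: str, rows: List[Row]) -> str:
    """A dashboard consumer: looks the metric up, never redefines it."""
    return f"{name}: {METRICS[name](rows):.2f}"


def assistant_answer(name: str, rows: List[Row]) -> str:
    """An AI-assistant consumer: uses the same governed definition."""
    return f"Your {name} is {METRICS[name](rows):.2f}."


rows = [
    {"amount": 100.0, "refunded": 0.0},
    {"amount": 250.0, "refunded": 50.0},
]
print(dashboard_tile("revenue", rows))
print(assistant_answer("revenue", rows))
```

Because both consumers read from the same registry, they cannot disagree about what "revenue" means, and an attempt to register a second definition under the same name fails loudly instead of drifting silently.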

Here's what the timeline actually looks like:

- Day 1: Connect your core data sources — Stripe, HubSpot, your product database. OAuth or API key. Minutes per source.

- Day 2–3: Define your key metrics in the semantic layer (what "revenue" means, how you calculate churn, what counts as an active user). This is the work that matters, and it only happens once.

- Day 3–5: Your first executive dashboard is live. Not a prototype, but a working dashboard with real data that updates automatically.

- Week 2: Your team is asking the AI assistant questions in plain English and getting trustworthy answers, because every query runs through the same governed definitions.

This is what we built with Definite. It's not the right choice for every company — but it's built specifically for the companies that keep hitting the failure modes above.

One Series A SaaS team, previously running a cloud warehouse, managed ETL, and a BI tool, cut their analytics spend from $2,400/month to $250/month after consolidating onto a single platform. More importantly, they went from months of setup to working dashboards in days. The e-commerce company from our third story? They skipped the Snowflake-or-Databricks build entirely and had dashboards across all 11 stores within weeks.

"But what if I outgrow it?"

This is the question we hear most often — and it's the right question to ask. The fear isn't that a simpler approach won't work today. It's that you'll pick it, outgrow it in 18 months, and have to rip it out and start over.

Here's the honest answer: if your company grows to 500+ employees with a dedicated team of data engineers running custom ML pipelines, you may eventually need the flexibility of an assembled stack. That's a real scenario.

But two things are true:

- Starting with a consolidated platform doesn't lock you in. Definite is built on open standards — your data lives in portable formats, your metric definitions export cleanly, and nothing is trapped in a proprietary system. If you outgrow the platform, your models, metrics, and data move with you.

- Most companies never reach the scale where the assembled stack is the right answer. The "what if I outgrow it" fear is real, but it's usually a hedge against a scenario that doesn't arrive. Meanwhile, the cost of starting with the assembled stack right now — in months delayed, dollars spent, and opportunities missed — is concrete and immediate.

The safer bet is usually: get answers today with something that works, and evolve when (and if) the needs change.

What you give up

Honest tradeoffs of the consolidated approach:

- Less flexibility for custom data engineering workflows. If you need bespoke Python transformations, complex orchestration graphs, or custom ML model training against your warehouse, a consolidated platform won't replace a full engineering stack.

- You're depending on one vendor. If that vendor's roadmap diverges from your needs, switching costs are real — even with portable data. This is a tradeoff, not a dealbreaker, but you should know it going in.

- You won't impress a data engineering hire. If you're recruiting senior data engineers, they may prefer the assembled stack because it's what they know. The consolidated platform is optimized for teams without dedicated data infrastructure people — which is a strength and a limitation.

See what your current stack looks like →

How to Know Which Path You're On

| Failure mode | Warning signs |
|---|---|
| You'll never finish | No data engineer on staff. In evaluation mode for 1+ months. Every demo raises questions about what else you need. Board needs metrics in weeks, not quarters. |
| You'll build it but can't maintain it | Data person spends more time on infrastructure than analysis. New hires can't use the stack without a walkthrough. Multiple tools give different answers to the same question. |
| It'll cost more than it's worth | More tool contracts than people who use them. Surprised by a vendor pricing change in the last year. Data engineer spends majority of time on maintenance. |

When the assembled stack IS the right choice

The assembled stack works when you have the team it was designed for:

- 3+ dedicated data engineers who own integration and maintenance

- Custom ML/data science workloads requiring direct warehouse access

- Enterprise scale (500+ employees) with compliance requirements

- A working stack you're happy with — don't rip it out because a blog post told you to

"My board says we need Snowflake"

If you need to make the case internally for a different approach: frame it as a timeline and ROI question, not a technology debate. The assembled stack takes 3–6 months and requires a dedicated data engineer to operate. A consolidated platform delivers answers in days without the coordination overhead. Ask: "Would you rather have answers next month, or infrastructure next quarter?" You're not arguing against Snowflake — you're showing that for your company, at your stage, the fastest path to trustworthy answers doesn't require assembling four tools and hiring a data engineer.

If this sounds like your situation, Definite was built for it. Start free →