Data Stack vs Data Platform: Why AI Forces the Choice

If you've been asked to "figure out the data situation," you're weighing two paths: assemble a stack (Snowflake + Fivetran + dbt + Looker) or adopt a platform that does it all. The difference between these options used to be semantic — two labels for roughly the same thing. You assemble tools, you get analytics. Either way, you end up with dashboards.

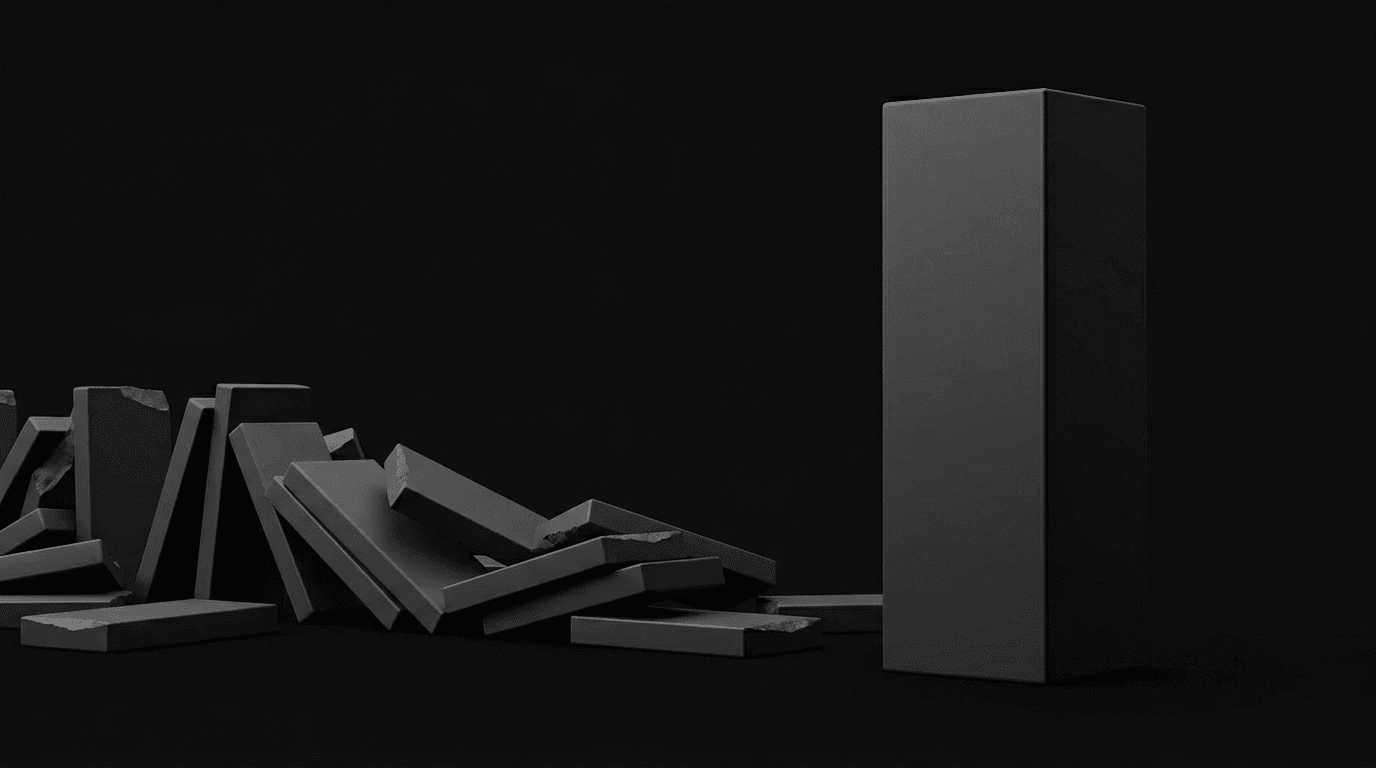

That's no longer true. AI changed the equation — not because AI is magic, but because AI agents need something stacks structurally don't provide: a single, governed system they can read from, write to, and act across. If your analytics foundation is four tools duct-taped together, AI can query it. It cannot build on it. That distinction — query vs. build — is the one that matters now.

We wrote The Modern Data Stack is Dead to argue the stack era is ending. We wrote What Is a Data Platform? to define the alternative. If you're still weighing whether to buy the four-vendor bundle, read our evidence-based breakdown of why Snowflake + Fivetran + dbt + Looker projects stall. This is the decision post — the one that helps you choose.

A note on scope: This framework applies to companies under ~200 employees with one or two people dedicated to data and analytics — the teams where the choice between stack and platform has the highest stakes and the most lopsided tradeoffs. If you have a 7-person data engineering team, the calculus is different.

What we mean (and don't mean) by each term

- A data stack is a set of separate tools you assemble: a warehouse (Snowflake, BigQuery, Redshift), an ingestion layer (Fivetran, Airbyte), a transformation layer (dbt), and a BI tool (Looker, Metabase, Tableau). You pick best-of-breed for each layer and connect them. The promise is flexibility. The cost is coordination. (The same argument applies whether you're running the expensive stack or the lightweight version: Postgres + Airbyte + dbt Core + Metabase. The coordination tax scales with the number of tools, not the price tag. If the warehouse line item is the one you're questioning first, our stage-by-stage BigQuery alternatives breakdown walks through when to optimize, swap, or replace.)

- A data platform is an integrated system where ingestion, modeling, analytics, and AI share a single foundation: one product, one semantic layer, one context for AI to operate in. You don't assemble it. You set it up. (For the full definition, see What Is a Data Platform?)

Google treats these as near-synonyms. They're not — at least not anymore. The difference used to be academic: both paths got you dashboards. Both could answer last quarter's revenue question. But the moment you need AI that does more than generate SQL — AI that builds models, updates dashboards, modifies pipelines, and acts on governed metrics — the architectural gap becomes a chasm.

The maintenance reality nobody talks about

Before the structural argument, here's the one you'll feel every week — especially if you're the one person maintaining whatever gets chosen.

According to our B2B SaaS Data Stack Cost Guide, a full data stack at a 50-person company requires roughly a quarter of a full-time person in ongoing maintenance. That's a quarter of your role spent on connector monitoring, dbt model updates, pipeline debugging, dashboard fixes, and access management. Not analysis. Not the dashboards your CEO asked for. Maintenance.

What does that look like week to week? Monday morning, a Fivetran sync failed overnight because HubSpot changed an API field — you debug it. Tuesday, someone asks why the revenue number in Looker doesn't match the Snowflake query — you trace it to a dbt model that wasn't updated after a schema change. Wednesday, your CEO wants a new dashboard by Friday — but you're still fixing Tuesday's issue. Thursday, you're fielding Slack messages about dashboard access permissions. Friday, you're doing the analysis you were actually hired to do. That's the stack tax — and it compounds as your data sources grow.

And the scaling curve is brutal. The same data shows that when companies grow from 50 to 150 employees, tech costs increase 1.6x, but people costs increase 9.5x. The stack doesn't just get more expensive. It gets disproportionately more labor-intensive: headcount triples while the people cost of running the stack grows 9.5x. That's the compounding maintenance tax most stack-vs-platform comparisons ignore.

One fintech founder described the experience after signing with Snowflake: "After spending almost a month and a half doing a lot of this work, we're still not able to write queries to get the right information." Six weeks of setup, still no answers. That's not a bad tool — it's a bad architecture for a team that size.

Why AI makes the maintenance problem worse, not better

You might think AI is the solution to the maintenance burden — just point a chatbot at your stack and let it help. That works for simple queries. But the moment you want AI that actually operates your analytics — building dashboards, modifying models, acting on governed metrics — the fragmentation of a stack becomes a structural problem, not just an inconvenience.

Here's a concrete example: your CEO asks for a revenue-by-channel breakdown. In a platform, the AI agent builds it in minutes — one system, one semantic layer, one set of governed metric definitions. In a stack, you're writing a dbt model, updating a Looker explore, debugging a Fivetran sync to make sure the marketing data is current, and cross-referencing metric definitions across three tools. That's Wednesday gone.

AI agents need three things from your data layer, and stacks don't provide them without significant assembly and ongoing coordination.

AI needs one definition of revenue — stacks give it four

AI needs shared metric definitions (one canonical definition of "revenue," "churn," "active user") that every query, dashboard, and automation uses. In a stack, semantic consistency is possible (dbt's MetricFlow, Looker's LookML), but it lives in one tool and has to be synchronized across the other three. Metrics defined in dbt don't automatically govern what Looker displays or what your AI assistant answers. Every tool has its own version of the truth, and keeping them aligned is manual, fragile work.

In a platform with a built-in semantic layer, the definitions are centralized. Every query — human or AI — runs through the same governed layer. There's one "revenue." Not four.
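As a rough illustration of what "one governed layer" means in practice, here is a minimal Python sketch. It is not any vendor's API: the `Metric` class, the `METRICS` registry, the `compile_query` function, and the `paid_invoices` table are all hypothetical names chosen for the example. The point is only that a dashboard and an AI agent both resolve "revenue" through the same definition.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Metric:
    """One governed definition: a name, a SQL expression, and a source table."""
    name: str
    expression: str
    table: str


# Hypothetical registry: the single place where "revenue" is defined.
METRICS = {
    "revenue": Metric(
        name="revenue",
        expression="SUM(amount_usd)",
        table="paid_invoices",
    ),
}


def compile_query(metric_name: str, group_by: Optional[str] = None) -> str:
    """Build SQL for a governed metric; metrics outside the registry are rejected."""
    if metric_name not in METRICS:
        raise KeyError(f"'{metric_name}' is not a governed metric")
    metric = METRICS[metric_name]
    select = f"{metric.expression} AS {metric.name}"
    if group_by is None:
        return f"SELECT {select} FROM {metric.table}"
    # Sketch only: a real layer would validate group_by against known dimensions.
    return (
        f"SELECT {group_by}, {select} "
        f"FROM {metric.table} GROUP BY {group_by}"
    )


# A dashboard and an AI agent both go through the same function,
# so they cannot disagree about what "revenue" means.
dashboard_sql = compile_query("revenue", group_by="channel")
agent_sql = compile_query("revenue", group_by="channel")
print(dashboard_sql == agent_sql)
```

In a stack, the equivalent of `METRICS` typically exists once per tool, which is where the drift comes from.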

AI that can only read is an assistant — you need an operator

Most AI analytics tools today are read-only: they can query your warehouse and summarize what they find. That's useful, but it's a ceiling. An AI agent that can only read is an AI assistant. An AI agent that can write — build a new dashboard, modify a data model, create a pipeline, update a metric definition — is an AI operator.

Stacks make write access fragmented by design. Your AI would need API access to Snowflake, Fivetran, dbt, and Looker separately, with different authentication, different schemas, and different side effects.

The obvious counter: just point a coding agent at all four tools. Give it MCP servers, API keys, CLI access to each layer. Claude Code, Cursor, Windsurf — they can all hold multiple tool connections at once. Technically, your AI can now write to everything.

But connecting an AI to four tools is not the same as giving it one system to operate in:

- No cross-system dependency graph. The AI doesn't know which Looker dashboards break when it modifies a dbt model, or which downstream pipelines depend on the table it just altered. Each tool is a silo. The AI is guessing at the connections between them.

- No transactional safety across tools. If the AI updates a dbt model, refreshes a Snowflake view, and the Looker deployment fails halfway, you're in an inconsistent state across three systems with no unified rollback.

- Four ontologies instead of one. "Revenue" is a metric in dbt, a measure in Looker, a column in Snowflake, and doesn't exist in Fivetran at all. The AI has to translate between each tool's representation of the same concept (or its absence), and it gets things wrong at the edges, where definitions diverge silently.

- Glue is not governance. An AI with four API keys can write anywhere, but nothing enforces that its writes are consistent. There's no shared semantic layer validating that the dashboard it just built in Looker actually matches the metric definition in dbt. You've given the AI surface area without structure — it can touch everything and govern nothing.

A platform gives AI one system to operate in, with governed write access across all layers. The write operations are inherently consistent because there's one semantic layer, one dependency graph, and one set of guardrails — not four disconnected tools held together by an agent's context window.
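Two of the properties listed above, a dependency graph and all-or-nothing writes, can be sketched in a few lines of Python. This is a toy in-memory model under stated assumptions, not how any particular platform implements them: the asset names in `DOWNSTREAM` and the helpers `impacted_assets` and `apply_all_or_nothing` are hypothetical.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets that read from it.
DOWNSTREAM = {
    "raw.stripe_charges": ["model.revenue"],
    "model.revenue": ["dashboard.revenue_by_channel", "dashboard.board_kpis"],
    "dashboard.revenue_by_channel": [],
    "dashboard.board_kpis": [],
}


def impacted_assets(changed):
    """Breadth-first walk returning everything downstream of `changed`."""
    seen = set()
    order = []
    queue = deque(DOWNSTREAM.get(changed, []))
    while queue:
        asset = queue.popleft()
        if asset in seen:
            continue
        seen.add(asset)
        order.append(asset)
        queue.extend(DOWNSTREAM.get(asset, []))
    return order


def apply_all_or_nothing(steps):
    """Run (apply, undo) pairs in order; on failure, undo completed steps in reverse."""
    done = []
    try:
        for apply, undo in steps:
            apply()
            done.append(undo)
    except Exception:
        for undo in reversed(done):
            undo()
        return False
    return True


# Simulated change: the model update succeeds, the dashboard deploy fails.
state = {"model": "v1", "dashboard": "v1"}


def fail_deploy():
    raise RuntimeError("dashboard deploy failed")


ok = apply_all_or_nothing([
    (lambda: state.update(model="v2"), lambda: state.update(model="v1")),
    (fail_deploy, lambda: None),
])
print(impacted_assets("model.revenue"), ok, state)
```

The sketch only works because the lineage and every undo step live in one process. Spread across four vendors' APIs, neither the graph nor the rollback has an obvious home, which is the gap described above.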

AI-as-SQL-generator works fine on a stack. AI-as-agent — building, modifying, and acting across the system — does not. If all you need is a chatbot that writes queries, the stack is fine. If you want AI that operates your analytics, you need integration the stack can't easily provide.

When a stack still makes sense

We sell a platform. Here's where we genuinely think a stack is the better choice:

- You have a dedicated data engineering team (3+ people). The coordination cost that crushes a solo data person is manageable when you have specialists for each layer. A data engineer maintaining dbt, a platform engineer managing Snowflake, an analytics engineer building Looker dashboards — the stack's modularity becomes an advantage, not a tax.

- Your data volumes require specialized compute. Petabyte-scale workloads, sub-second query latency requirements, or highly custom ML pipelines — these edge cases genuinely benefit from dedicated infrastructure you can tune at each layer.

- You need deep customization of every layer. Some industries (healthcare data, financial compliance, regulated ML) require fine-grained control over exactly how data moves, transforms, and is accessed at each stage. A platform optimizes for the 90% case; a stack lets you control the 100%.

- You're already running a stack that works. Don't rip something out because a blog post told you to. If your current stack is delivering answers, your team can maintain it, and the migration cost exceeds the maintenance cost — keep it. Most companies rebuild their stack every 2-3 years anyway; evaluate a platform at the next natural transition point.

- The middle path works for you. A lightweight stack — Postgres as your warehouse, Metabase for BI, dbt Core for modeling — is cheap and simple enough for many small teams. If this covers your needs and you're not pushing toward AI-driven workflows, it's a legitimate option.

When a platform is the obvious choice

A platform fits when the stack's coordination costs exceed its modularity benefits — which, for most growing companies with small data teams, happens almost immediately:

- Your CEO asked for dashboards three weeks ago and you're still debugging the pipeline. The typical stack implementation takes 10+ weeks. A platform can have you running dashboards in a day — not because it's simpler, but because the integration work is already done.

- You're the sole data person (or one of two). A quarter of your time going to infrastructure instead of analysis is not sustainable. A platform collapses that maintenance burden to near zero.

- Leadership is asking for AI analytics and your stack can't support it. Natural language queries, automated insights, data agents: these require a foundation AI can operate on. Bolting AI onto a fragmented stack tends to end the way one customer described it: "the AI promise collapsed on Metabase." The model isn't the problem. The foundation is.

- You're managing four vendor contracts and wondering why. Four renewal cycles, four pricing models, four integration points. A platform is one bill, one relationship, one system. If it doesn't work out, your data should be exportable in open formats (Parquet, Iceberg). Look for that before you commit.

- You're building for AI from day one. Starting with an integrated platform avoids a painful migration later. Retrofitting AI onto a stack is possible. Retrofitting a governed semantic layer across four tools is far harder, because every definition has to be kept aligned by hand in each tool.

What to look for in a data platform

If you go the platform route, here's the evaluation rubric — not a product pitch, but the criteria that matter to the person who has to live with the choice. (For the full buyer's guide, see our Data Platform Buyer's Guide.)

Must-haves:

- Full SQL access. If you can't write SQL — CTEs, window functions, raw queries — the platform is a toy. Period. You need the escape hatch for edge cases a drag-and-drop builder can't handle.

- Real connectors for your specific sources. "500+ connectors" is marketing. What matters is whether it connects to your HubSpot, your Stripe, your Salesforce, your Postgres. Verify each one during evaluation — not on a features page, in an actual trial with your data.

- A semantic layer with governed metrics. One definition of "revenue" that every query uses. Without this, you're back to metric drift — just inside one tool instead of four.

- Data portability in open formats. Your data should be yours — not trapped behind a proprietary storage layer. Look for platforms built on open standards like DuckLake (DuckDB + Iceberg), Parquet, or native Iceberg support. If the underlying storage format is open, you can walk away with your data intact. Ask specifically what format your data lives in, not just what you can export.

- AI that builds and acts, not just queries. If the AI can only ask questions about your data, it's a chatbot, not an agent. Look for AI that can create dashboards, modify models, and trigger automations — across the full system, not just one layer.
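To make the "full SQL access" criterion concrete, here is the kind of query worth running during a trial: a CTE feeding a window function. The sketch uses Python's built-in `sqlite3` module purely so it is self-contained (window functions need SQLite 3.25 or newer); the `orders` table and its rows are made-up sample data, not figures from any source.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (channel TEXT, month TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [
        ("paid_search", "2024-01", 100.0),
        ("paid_search", "2024-02", 150.0),
        ("organic", "2024-01", 80.0),
        ("organic", "2024-02", 120.0),
    ],
)

# CTE: monthly revenue per channel. Window function: running total per channel.
QUERY = """
WITH monthly AS (
    SELECT channel, month, SUM(amount) AS revenue
    FROM orders
    GROUP BY channel, month
)
SELECT
    channel,
    month,
    revenue,
    SUM(revenue) OVER (PARTITION BY channel ORDER BY month) AS running_revenue
FROM monthly
ORDER BY channel, month
"""

rows = conn.execute(QUERY).fetchall()
for row in rows:
    print(row)
```

If a platform's SQL editor rejects a query of this shape against your own tables, treat that as a failed must-have.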

Strong signals:

- Embedded Python execution (for custom logic without external tools)

- API and MCP access (so AI agents in tools you already use — Claude, Cursor — can query and act on your governed data without context-switching)

- Credit-based pricing with a free tier (test with real data before committing)

Red flag:

- Any platform that requires you to also buy a separate warehouse, ETL tool, or BI layer is a platform in name only. It's a stack component with better marketing.

The fork in practice: stack vs platform side-by-side

| Dimension | Data Stack | Data Platform |
|---|---|---|
| What it is | 4+ separate tools assembled and integrated by you | One integrated system: ingestion, modeling, analytics, AI |
| Setup time | 10+ weeks typical; 6 weeks to first query at best | Days to first dashboard; connectors + semantic layer from day one |
| Ongoing maintenance | ~A quarter of a full-time role | Near zero; the platform handles infrastructure |
| AI readiness | Read-only by default; agentic AI requires assembling semantic consistency across tools | AI-native: agents read, write, and act across the entire system |
| Semantic consistency | Possible but manual; requires dbt + BI alignment | Built-in: one semantic layer governs all queries |
| Who can use it | Data engineers and SQL-fluent analysts | Anyone: SQL when you need it, drag-and-drop when you don't |
| Data portability | Each tool has its own export; warehouse data is yours | Open storage (DuckLake, Parquet, Iceberg): your data, your format |
| Scaling path | Add tools, add people, add coordination | Same platform grows with you; no re-architecture needed |
| Rebuild cycle | Most companies rebuild every 2-3 years | No rebuild; same system from startup to scale |
| Annual tool cost (50 employees) | $15,000–$38,000/year across 4+ vendor contracts | One bill: starts at $250/mo (overages at heavy usage) |
| Total cost with people | $62,000–$85,000/year including maintenance time | Platform cost + your time goes to analysis, not ops |
| Where it breaks down | Solo data person drowning in maintenance | Teams that need deep, per-layer customization at petabyte scale |

Note on cost: We're showing tool costs separately from people costs because conflating them is misleading. The tool cost of a stack is not the scary number — the people cost is. That 9.5x multiplier from 50 to 150 employees is the hidden tax. (Want to run the math for your specific stack? Try the data stack cost calculator.)
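The split between tool cost and people cost can be worked through directly from the table's figures. The Python sketch below subtracts one from the other and then applies the 1.6x and 9.5x multipliers quoted earlier. Applying those multipliers to these specific ranges is an assumption made for illustration; the 150-employee totals it produces are an extrapolation, not numbers from the cost guide.

```python
# Figures from the comparison table (50-person company, USD per year).
TOOL_COST_50 = (15_000, 38_000)    # low and high estimate
TOTAL_COST_50 = (62_000, 85_000)   # tools plus maintenance time

# Growth multipliers quoted for going from 50 to 150 employees.
TECH_MULTIPLIER = 1.6
PEOPLE_MULTIPLIER = 9.5

# People cost is whatever remains after subtracting tools from the total.
people_cost_50 = tuple(
    total - tool for total, tool in zip(TOTAL_COST_50, TOOL_COST_50)
)

# Assumption: the multipliers apply directly to the ranges above.
tool_cost_150 = tuple(round(c * TECH_MULTIPLIER) for c in TOOL_COST_50)
people_cost_150 = tuple(round(c * PEOPLE_MULTIPLIER) for c in people_cost_50)
total_cost_150 = tuple(t + p for t, p in zip(tool_cost_150, people_cost_150))

print("People cost at 50 employees:", people_cost_50)
print("Tool cost at 150 employees:", tool_cost_150)
print("People cost at 150 employees:", people_cost_150)
print("Total at 150 employees:", total_cost_150)
```

Under these assumptions the people line dominates the total at 150 employees regardless of which end of the tool-cost range you start from, which is the point of keeping the two numbers separate.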

FAQ

Can I migrate from a stack to a platform later?

Yes. The migration path depends on what you have: if your data is in Snowflake or BigQuery, a platform can connect to it directly as a source during transition. The harder part is migrating your semantic definitions (dbt models → platform semantic layer), dashboards, and automations. Budget 1-2 weeks for a thoughtful migration, not a forklift. Look for a platform that can import from your existing warehouse rather than requiring you to re-ingest everything.

What if I outgrow the platform?

"Outgrow" usually means one of three things: data volume (billions of rows), query complexity (sub-second latency requirements), or team size (you need more customization per layer). Most platforms handle the first two up to the scale of a 200-300 person company. For team size, the question is whether the platform supports enough extensibility (SQL, Python, API) to let a growing team work without fighting the tool. If you hit genuine enterprise scale and need per-layer control, a platform built on open storage like DuckLake (DuckDB + Iceberg) makes the transition far less painful than migrating off a proprietary format — your data is already in open standards.

Is a data platform just a BI tool with extra features?

No. This is the most common misconception. BI tools visualize data that already exists in a functioning system — they assume someone else has handled ingestion, transformation, and governance. A data platform replaces that entire system, including the BI layer. Visualization is an output, not the product. If you're evaluating a "platform" that requires you to also buy a warehouse, it's a BI tool in a bigger box.

Do I still need dbt with a data platform?

Depends on the platform. If it has a built-in semantic layer with governed metrics — where you define "revenue" once and every query, dashboard, and AI output uses that definition — dbt's modeling role is handled natively. You might still want dbt for complex transformations during the transition, but the end state is a platform where the semantic layer replaces what dbt was doing for you. If the platform doesn't have a semantic layer, you still need dbt, and you should question whether it's really a platform.

What about Databricks? Isn't that a "platform" too?

Fair question. Databricks calls itself a platform, and it is one — for data engineers. It's built for teams that want deep control over every layer: Spark pipelines, Delta Lake, MLflow, Unity Catalog. If you have a data engineering team, it's powerful. If you're a solo RevOps lead trying to get dashboards to your CEO by Friday, Databricks is overkill. The distinction is analyst-first platform (what this post is about) vs. engineer-first platform. Both are platforms. They're built for different people.

If you're at the decision point — stack or platform — the fastest way to answer the question is to try both paths. But if you'd rather skip the 10-week stack implementation and see what a platform can do with your actual data, try Definite free. Connect your sources, write SQL against governed metrics, and test the AI agent — in under an hour, not under a quarter.