Can PostHog Work as Your Data Warehouse?

You already have PostHog. It's been solid for product analytics — funnels, retention, session replays. But now your CEO wants revenue trends. Finance wants churn numbers. Ops wants pipeline metrics. So you're asking whether PostHog can work as your data warehouse — one place for events and those business numbers — or whether you need something else. Maybe you're picturing connecting your production database and calling it done.

It's a reasonable question. PostHog added a data warehouse feature, with a managed DuckDB version in beta, and it lets you link external sources alongside your event data. If it works, you avoid building the full Snowflake + Fivetran + Looker stack that Reddit keeps recommending. If it doesn't, you've wasted a few weeks and you're back to square one.

PostHog's warehouse works for some of this. It doesn't work for all of it. And the gap between the two is wider than PostHog's marketing suggests, something PostHog itself acknowledges in the disclaimers on its own product page.

In short:

- PostHog's warehouse handles product analytics and basic source linking well — don't over-engineer what it can handle

- It hits a structural ceiling for business analytics: no semantic layer, limited data modeling, and BI that PostHog themselves call "not quite ready"

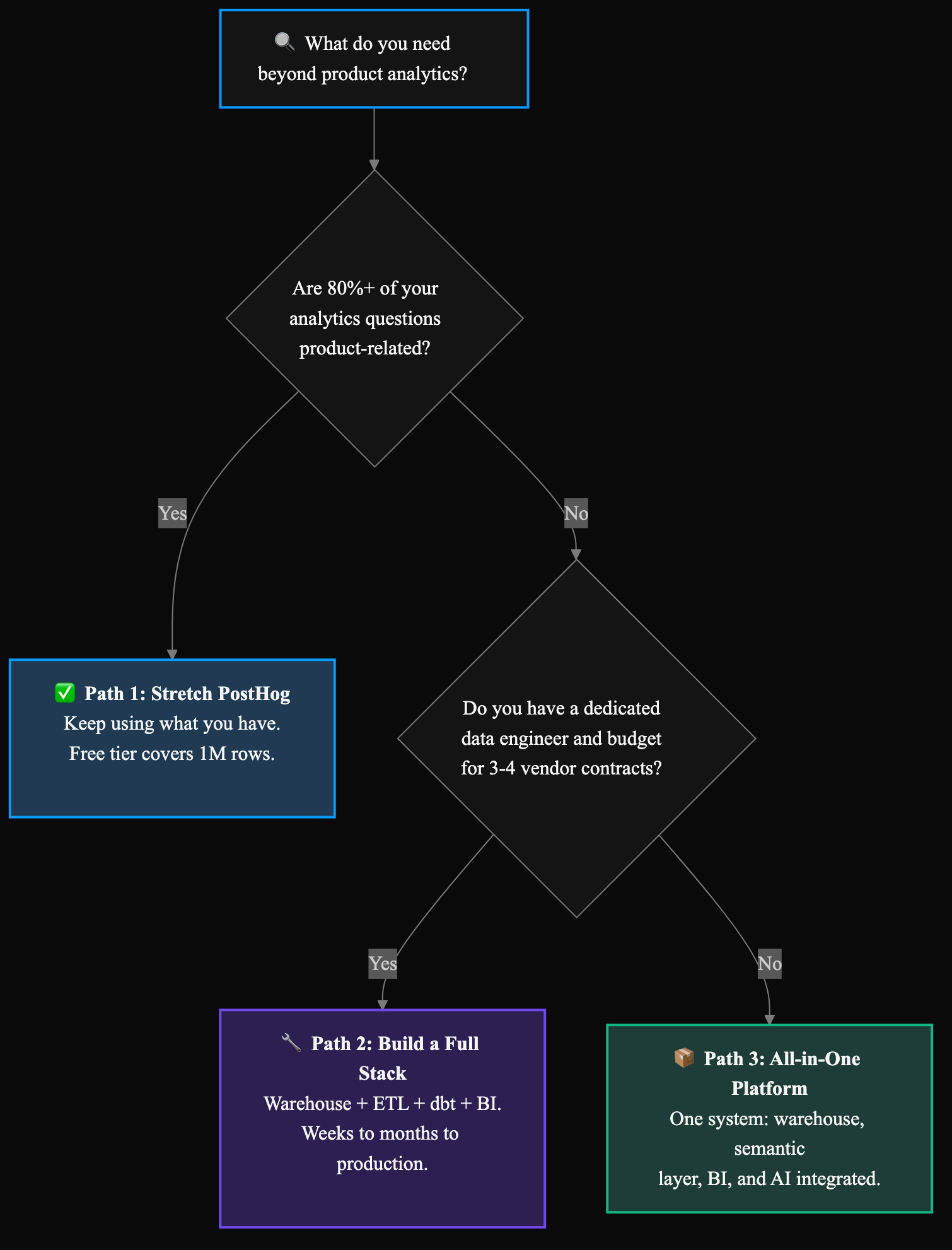

- You have three realistic paths: stretch PostHog, build a full data stack, or use an all-in-one data platform

What PostHog's data warehouse actually does

PostHog's warehouse isn't a bolt-on feature — it's a genuine attempt to become a data platform. Here's what it offers as of early 2026:

Data ingestion: 36 managed sources including Stripe, HubSpot, Salesforce, Shopify, Postgres, MySQL, MongoDB, BigQuery, Snowflake, and Redshift. You can also bring data from S3, Google Cloud Storage, Azure Blob, and Cloudflare R2. For a product analytics tool, that's a meaningful list.

Query engine: SQL access to query across PostHog events and linked external data in one place. You can join your Stripe billing data with your product usage events, which is genuinely useful.
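To make the billing-plus-usage join concrete, here is a minimal sketch. It uses Python's built-in `sqlite3` as a stand-in for the warehouse, and the table names (`events`, `stripe_invoices`) and columns are assumptions for illustration, not PostHog's actual schema or SQL dialect. The one design point worth copying is that each source is aggregated before the join, so a customer with many events and many invoices doesn't get double-counted.

```python
import sqlite3

# In-memory SQLite stands in for the warehouse. Table and column names are
# hypothetical, not PostHog's actual schema.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE events (customer_id TEXT, event TEXT);
    CREATE TABLE stripe_invoices (customer_id TEXT, amount_usd REAL);

    INSERT INTO events VALUES
        ('c1', 'report_exported'), ('c1', 'report_exported'),
        ('c2', 'report_exported'), ('c3', 'login');
    INSERT INTO stripe_invoices VALUES
        ('c1', 500.0), ('c2', 100.0), ('c2', 50.0), ('c3', 900.0);
""")

# Aggregate each source on its own first, then join the two summaries.
QUERY = """
    WITH feature_usage AS (
        SELECT customer_id, COUNT(*) AS exports
        FROM events
        WHERE event = 'report_exported'
        GROUP BY customer_id
    ),
    billing AS (
        SELECT customer_id, SUM(amount_usd) AS revenue_usd
        FROM stripe_invoices
        GROUP BY customer_id
    )
    SELECT b.customer_id, b.revenue_usd, COALESCE(u.exports, 0) AS exports
    FROM billing AS b
    LEFT JOIN feature_usage AS u ON u.customer_id = b.customer_id
    ORDER BY b.customer_id
"""

rows = conn.execute(QUERY).fetchall()
for customer_id, revenue_usd, exports in rows:
    print(customer_id, revenue_usd, exports)
```

Joining the raw tables directly would count c2's single export once per invoice, which is exactly the kind of quiet error that ungoverned joins produce.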

Dashboards: Built-in visualization and dashboarding for both product and warehouse data.

Architecture: The managed DuckDB warehouse is PostHog's next-generation foundation, but it is currently in beta with a waitlist, and the production system still runs on ClickHouse. (For more on DuckDB as an analytics engine, see our DuckDB data warehouse guide.) PostHog also offers a "Duckgres" wrapper that lets any Postgres-compatible tool connect to their warehouse, which shows they're serious about data infrastructure.

Pricing: The free tier includes 1 million warehouse rows, which means you can test this at real (small) scale before committing.

If your analytics needs are 80% product-related and you only need a few external sources, PostHog's warehouse may be genuinely sufficient. Don't over-engineer what PostHog can handle. (If you're starting from scratch, see our guide to choosing a data warehouse for startups.)

Where PostHog's warehouse hits a ceiling

PostHog's own data warehouse page includes three disclaimers that most people miss:

Data modeling: "Not quite ready for: data engineers. We recommend bringing your favorite tools like dbt for now until our tooling is more mature."

SQL editor: "Not quite ready for: data analysts. We recommend bringing your favorite tools like Hex."

Business intelligence: "Not quite ready for: data analysts. We recommend bringing your favorite tools like Hex for now until our tooling is more mature."

These aren't buried in a changelog — they're on the product page, tagged as Beta. PostHog is being unusually honest here. But read what they're actually saying: if you're a data person trying to do real analytics work, PostHog is telling you to bring your own tools for modeling, querying, and visualization. At that point, you're assembling a mini-stack anyway.

The ceiling isn't just about features that haven't shipped yet. It's structural:

No semantic layer. PostHog has event data and linked sources. It does not have centralized, governed metric definitions. When your CEO asks "what's our churn rate?" and your CFO asks the same question, they should get the same number from the same definition. PostHog doesn't enforce that. A semantic layer ensures every query, dashboard, and AI answer uses the same metric logic — it's the difference between "product analytics" and "business analytics."

The AI angle sharpens the same problem. If "MRR" is defined three different ways across joins and ad hoc SQL, an LLM will confidently produce three plausible answers. That's the same failure mode as pasting exports into ChatGPT — just with a nicer UI. AI over business data needs governed metrics first, not just a warehouse connection. PostHog's AI features are aimed at product analytics (session insights, funnels). They aren't a substitute for a semantic layer that backs business metrics across stakeholders.
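Here is a small sketch of how that drift happens, and what "governed" means in practice. Everything in it is hypothetical: the `Subscription` fields, the sample data, and the `METRICS` registry are illustrative stand-ins, not any vendor's API. Three people each write a defensible MRR calculation over the same four subscriptions and get three different numbers; the registry at the end is the minimal version of a semantic layer, where one named definition is the only thing dashboards, SQL, and AI are allowed to call.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subscription:
    customer: str
    monthly_price: float  # list price per month, USD
    discount: float       # fraction off list price, e.g. 0.5 for 50%
    status: str           # "active", "trialing", or "canceled"


# Hypothetical sample data.
SUBSCRIPTIONS = [
    Subscription("acme", 1000.0, 0.0, "active"),
    Subscription("globex", 500.0, 0.5, "active"),
    Subscription("initech", 300.0, 0.0, "trialing"),
    Subscription("umbrella", 200.0, 0.0, "canceled"),
]


# Three ad hoc definitions, each reasonable in isolation.
def mrr_list_price(subs):
    """List price of everything not canceled (ignores discounts)."""
    return sum(s.monthly_price for s in subs if s.status != "canceled")


def mrr_net_with_trials(subs):
    """Discounted price of everything not canceled (counts trials)."""
    return sum(s.monthly_price * (1 - s.discount)
               for s in subs if s.status != "canceled")


def mrr_net_paying(subs):
    """Discounted price of paying customers only."""
    return sum(s.monthly_price * (1 - s.discount)
               for s in subs if s.status == "active")


# The governed version: one registry, one definition per metric name.
METRICS = {"mrr": mrr_net_paying}


def metric(name, subs=SUBSCRIPTIONS):
    """Every consumer asks for a metric by name instead of re-deriving it."""
    return METRICS[name](subs)


print(mrr_list_price(SUBSCRIPTIONS))       # 1800.0
print(mrr_net_with_trials(SUBSCRIPTIONS))  # 1550.0
print(mrr_net_paying(SUBSCRIPTIONS))       # 1250.0
print(metric("mrr"))                       # 1250.0
```

Which definition is "right" is a business decision. The point is that it gets made once, in one place, rather than separately in every query.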

Event-centric architecture. PostHog was built to track product events — page views, button clicks, feature usage. Its data model is optimized for this. Business metrics like revenue, CAC, LTV, and pipeline velocity require joining product data with billing, CRM, and financial sources under consistent definitions. You can link these sources in PostHog, but the system doesn't govern the joins or enforce consistency across them.

36 sources vs. the real world. PostHog supports 36 managed connectors. That covers the big names, but if you're pulling data from niche tools, internal APIs, or legacy systems, you'll hit gaps. Dedicated platforms offer 500+ connectors — a meaningful difference when your CFO needs data from a financial system PostHog doesn't support.

Audience mismatch. PostHog's warehouse is "perfect for product teams," per their own description. When your needs expand to executive dashboards, financial reporting, or operations analytics, you're asking a product-analytics-first system to do something it wasn't designed for. It's like using Figma as your project management tool because it happens to have commenting.

The three paths forward

If PostHog isn't enough for your business analytics needs, you have three realistic options. Each has honest tradeoffs.

Path 1: Stretch PostHog (when it's enough)

This works if:

- 80%+ of your analytics questions are product-related (funnels, retention, feature usage)

- You need basic external data (Stripe billing, HubSpot contacts) joined with product events

- Your team is small enough that one person can define metrics and everyone trusts their definitions

- You don't need governed, consistent metrics across departments

- Your data volume fits within PostHog's warehouse limits

What it costs: Nothing extra if you're already paying for PostHog. The free tier covers 1 million warehouse rows.

The risk: You'll outgrow this faster than you expect. The first time finance asks for a board-ready MRR dashboard that joins Stripe billing, HubSpot pipeline, and product usage — and nobody agrees on what counts as an "active customer" — you'll feel the ceiling.

Path 2: Build a full data stack (when you have the team for it)

This is what Reddit recommends: a warehouse (Snowflake, BigQuery), an ETL tool (Fivetran, Airbyte), a transformation layer (dbt), and a BI tool (Looker, Metabase). It's the "right" answer if you have the resources.

This works if:

- You have a dedicated data person (or team) who can set it up and maintain it

- Your data complexity justifies the infrastructure (many sources, complex transformations, strict governance)

- You have budget for 3-4 separate vendor contracts

- You can wait weeks to months for production-grade, governed analytics — a basic first dashboard might come together in a few weeks, but trusted, self-serve analytics for your whole company takes longer

What it costs: Three to four vendor contracts, each with usage-based pricing. The full cost depends on your data volume and tool choices, but the real expense is the coordination tax: keeping pipelines running, managing schema changes across tools, debugging breaks at the seams between vendors, and being the human glue that holds it together.

Worth noting: the stack is consolidating. dbt Labs and Fivetran announced a merger in late 2025, which should reduce the number of contracts. But you still need a warehouse, a BI tool, and a semantic layer on top.

The risk: If you're a solo data person, this path means becoming a full-time infrastructure maintainer. Every hour spent on pipeline maintenance is an hour not spent on the analysis your CEO actually hired you for.

Path 3: Use an all-in-one data platform (when you need analytics without the project)

This is the path those Reddit threads rarely mention. All-in-one data platforms bundle ingestion, warehouse, semantic layer, BI, and AI into a single system. You don't assemble tools; you connect your sources and start asking questions.

This isn't a new idea, but it's a category that gets less airtime because the conversation is dominated by stack-component vendors. If you've built a data platform before and know exactly what it costs to maintain, this is what choosing differently looks like.

This works if:

- You need business analytics beyond product metrics (revenue, operations, finance)

- You don't have the team or time to build and maintain a multi-tool stack

- You want governed metrics — a semantic layer that ensures everyone gets the same number

- You need to give non-technical leadership self-serve access to data

What it costs: One vendor, one contract. Definite, for example, starts free with full platform access (AI, dashboards, semantic layer, 500+ connectors) and scales by usage credits — not by seats or tools. You're trading multiple vendor contracts and coordination overhead for a single system.

AI and the semantic layer: The reason governed metrics matter more now is AI. When leadership asks Fi (Definite's assistant) or any agent connected via the MCP server about revenue, the answer comes from the same Cube-backed definitions as SQL queries and dashboards — not a best-guess join. That's the architectural pattern this category needs: consistent definitions everywhere, including conversational interfaces. PostHog's AI stays in the product-analytics lane.

The tradeoff: You're betting on one platform instead of best-of-breed components. If the platform doesn't support a source you need or a workflow you depend on, you have less flexibility than a custom stack. On the portability side: Definite uses standard SQL and exports to common formats, so your work isn't locked in — but check the connector list and SQL access before you commit.

Why this isn't a shortcut: If you've built data platforms before, you know what the full stack costs in time, maintenance, and cognitive overhead. Choosing an all-in-one platform isn't cutting corners — it's a deliberate architecture decision. You're choosing to spend your time on analysis instead of infrastructure. That's not laziness. That's experience.

How to decide which path fits

The decision isn't about which path is "best" — it's about which constraints matter most for your team right now.

| Factor | Stretch PostHog | Build a Stack | All-in-One Platform |
|---|---|---|---|
| Analytics needs | Mostly product | Complex, multi-department | Business + product |
| Team size | 0-1 data people | 1+ dedicated data engineers | 0-1 data people |
| Time to value | Immediate (already set up) | Weeks to months | Days |
| Maintenance | Low (PostHog manages it) | High (you manage 3-4 tools) | Low (one system) |
| Governed metrics | No | Yes (with dbt + semantic layer) | Yes (built-in) |
| Connector coverage | 36 managed sources | Depends on ETL tool (100-500+) | 500+ |
| Budget | Included in PostHog plan | $2-10K+/mo in tooling + time | Starts free, scales by usage |
| AI-ready | Product insights only | Bolt-on; you wire governance yourself | Built-in; AI and dashboards share one semantic layer |
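If it helps to see the table as a rule of thumb, here is a rough sketch. The `TeamProfile` fields and the order of the checks are our own simplification of the table, not a formal scoring model. The 80% threshold comes from Path 1 above; everything else is a judgment call you should adjust for your own constraints.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TeamProfile:
    product_question_share: float  # fraction of analytics questions about product (0.0-1.0)
    dedicated_data_engineers: int  # people who can build and maintain a stack
    needs_governed_metrics: bool   # must product, finance, and ops share definitions?
    can_wait_months: bool          # is weeks-to-months time to value acceptable?


def recommend_path(team: TeamProfile) -> str:
    """Map a team profile to one of the three paths (a heuristic, not a verdict)."""
    # Mostly product questions and no cross-department governance: stay put.
    if team.product_question_share >= 0.8 and not team.needs_governed_metrics:
        return "Stretch PostHog"
    # A full stack needs someone to run it and time to build it.
    if team.dedicated_data_engineers >= 1 and team.can_wait_months:
        return "Build a Stack"
    # Business analytics without the team or time for a stack.
    return "All-in-One Platform"


product_led = TeamProfile(0.9, 0, False, False)
staffed_up = TeamProfile(0.4, 2, True, True)
solo_analyst = TeamProfile(0.5, 0, True, False)

for team in (product_led, staffed_up, solo_analyst):
    print(recommend_path(team))
```

The checks run in order on purpose: if PostHog already covers you, that answer wins before the other two paths are even considered.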

The real question isn't "which tools?" — it's "what am I actually trying to solve?" If the answer is "I need leadership to stop Slacking me for numbers," that's a self-serve problem. If the answer is "I need consistent metrics across product, finance, and ops," that's a governance problem. If the answer is "we want AI that doesn't hallucinate three different churn rates," that's the same governance problem — semantic first, model second. The tools follow from the problem, not the other way around.

If you're not sure where your needs will land in 6 months, start with PostHog's warehouse. It's free to try, and you'll learn quickly whether the ceiling affects you. If it does, you'll know exactly what to look for in your next step — and you won't have wasted months building a stack you didn't need.

FAQ

Can I connect my production database directly to PostHog?

Yes — PostHog supports Postgres, MySQL, and MongoDB as data warehouse sources. But connecting your production database directly to any analytics tool carries performance risk. Analytical queries (aggregations, full-table scans) compete with your application's transactional workloads. If your analytics tool runs a heavy query during peak traffic, your app slows down. Use a read replica instead of connecting directly to your primary database, regardless of which analytics path you choose.
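One way to enforce the read-replica advice for Postgres is a guard that runs before any analytics query. The sketch below relies on Postgres's built-in `pg_is_in_recovery()` function, which returns true on a standby server; the helper names and the `FakeCursor` stub are ours, included only so the example runs without a database. With a real driver you would pass in an actual DB-API cursor, and managed database services may behave differently, so treat this as a starting point rather than a guarantee.

```python
class ReplicaCheckError(RuntimeError):
    """Raised when an analytics connection points at a primary database."""


def is_read_replica(cursor) -> bool:
    """Return True if the connected Postgres server is in recovery (a standby)."""
    cursor.execute("SELECT pg_is_in_recovery()")
    (in_recovery,) = cursor.fetchone()
    return bool(in_recovery)


def require_read_replica(cursor) -> None:
    """Refuse to proceed unless the connection targets a standby server."""
    if not is_read_replica(cursor):
        raise ReplicaCheckError("analytics connection points at the primary")


class FakeCursor:
    """Stand-in for a real DB-API cursor so the sketch runs without Postgres."""

    def __init__(self, in_recovery: bool):
        self._in_recovery = in_recovery
        self.last_sql = None

    def execute(self, sql: str) -> None:
        self.last_sql = sql

    def fetchone(self):
        return (self._in_recovery,)


# A replica passes silently; a primary is rejected before any heavy query runs.
require_read_replica(FakeCursor(in_recovery=True))
try:
    require_read_replica(FakeCursor(in_recovery=False))
except ReplicaCheckError as exc:
    print(f"blocked: {exc}")
```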

Is PostHog good enough for business analytics, or just product analytics?

PostHog is excellent for product analytics. For business analytics — revenue reporting, financial metrics, cross-departmental dashboards — it hits limitations. PostHog's own team says their data modeling, SQL editor, and BI are "not quite ready" for data analysts and engineers. If your needs are primarily product-related with some basic business metrics, PostHog works. If leadership needs governed, trustworthy business intelligence, you'll need to supplement or replace it.

What's a semantic layer and why does it matter?

A semantic layer is a centralized set of metric definitions that governs how every query, dashboard, and AI answer calculates business metrics. Without one, "revenue" might mean one thing in your product dashboard and something different in your finance spreadsheet — and an LLM will happily mirror that confusion at machine speed. PostHog doesn't have a semantic layer. Platforms like Definite (built on Cube) enforce consistent definitions across every surface — SQL, dashboards, AI — so when your CEO and CFO both ask about churn, they get the same number.

How long does it take to set up a full data stack vs. an all-in-one platform?

A basic first dashboard on a full stack (Snowflake + Fivetran + Metabase) can come together in a few weeks if you know what you're doing. But production-grade, governed, self-serve analytics for your whole company? That's months of work — and then ongoing maintenance. An all-in-one platform typically gets you from zero to working analytics in days because the components are already integrated. The tradeoff is less customization at the infrastructure level.

If you're hitting the ceiling with PostHog and don't want to build a full data stack, try Definite free — connect your sources, define metrics once, and give leadership self-serve access to the same governed numbers.